Shift-left data governance is the unlock for agentic AI

If governance starts after the agent is already in production, the architecture is upside down.

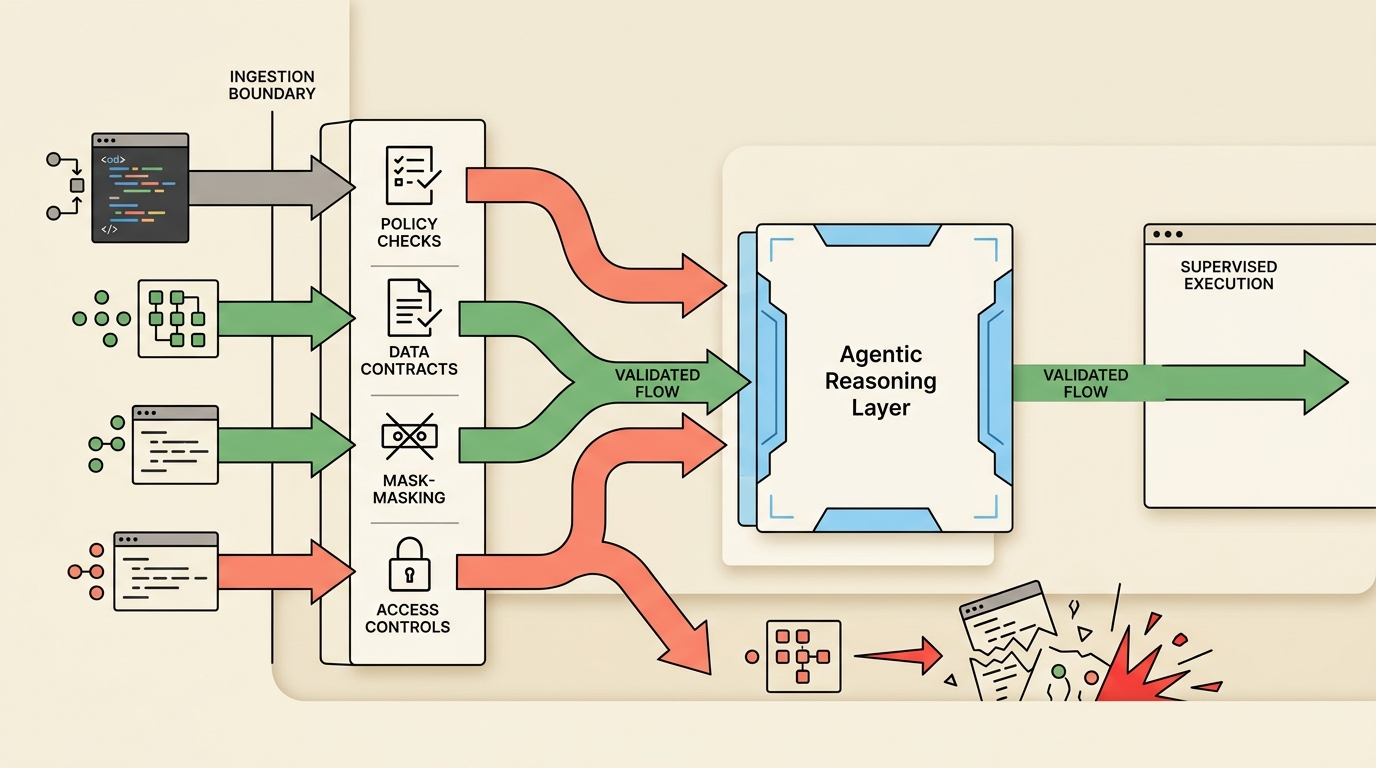

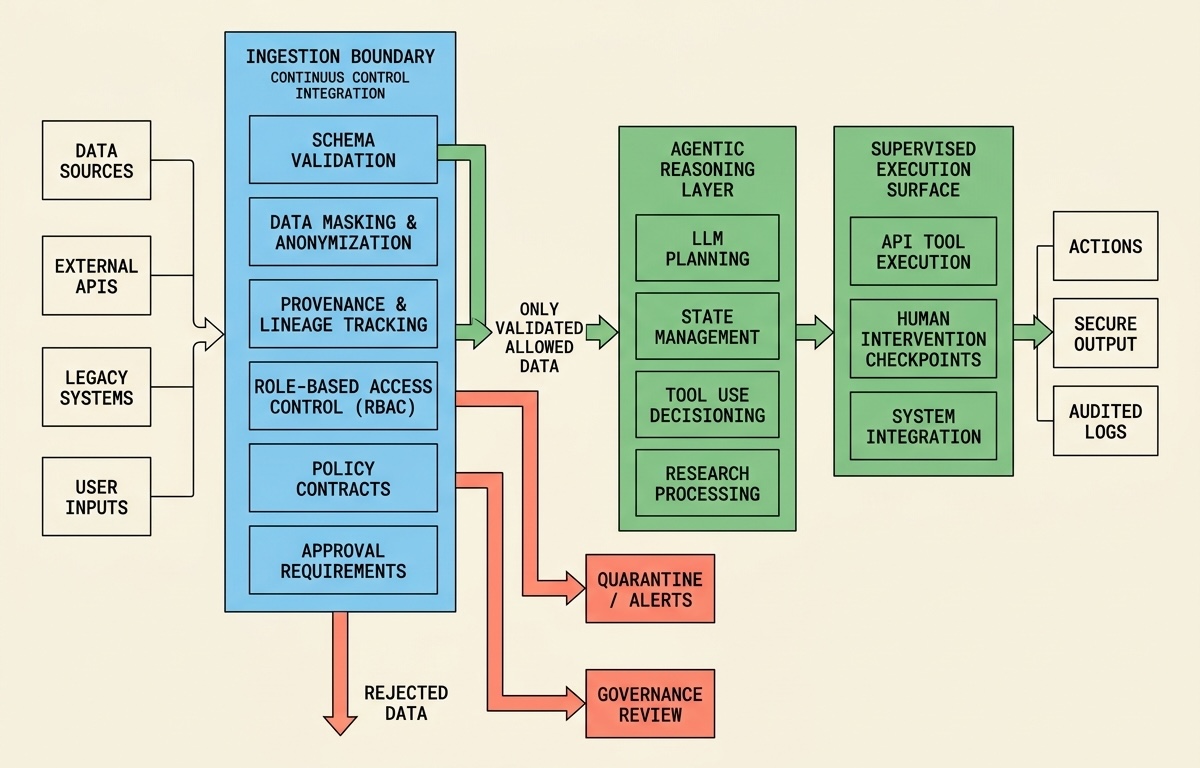

Governance has to start at ingestion

AI systems inherit the quality and safety of the data they receive. When data contracts, masking, and validation happen late, teams end up debugging failures after the agent has already taken a risky action or made a low-trust recommendation.

Shift-left governance moves those checks upstream. Schemas, access policies, and quality thresholds become part of the integration boundary rather than a downstream reporting concern.
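To make this concrete, here is a minimal Python sketch of a check at the integration boundary, assuming records arrive as dictionaries. The field names, the `FieldContract` type, the `validate_batch` function, and the 5% reject threshold are hypothetical choices for illustration, not a prescribed standard. Each record is checked against a field-level contract, and the whole batch is refused if too many records fail, so the agent never reasons over it.

```python
from dataclasses import dataclass
from typing import Any


@dataclass(frozen=True)
class FieldContract:
    """Hypothetical field-level contract: name, expected type, and whether it is required."""
    name: str
    type_: type
    required: bool = True


# Illustrative contract for a patient-record feed (field names are assumptions).
CONTRACT = [
    FieldContract("patient_id", str),
    FieldContract("age", int),
    FieldContract("diagnosis_code", str),
    FieldContract("notes", str, required=False),
]

# Assumed quality threshold: refuse the batch if more than 5% of records fail.
MAX_REJECT_RATE = 0.05


class ContractError(Exception):
    """Raised when a batch fails the quality threshold and must not reach the agent."""


def violations(record: dict[str, Any]) -> list[str]:
    """List every way a single record breaks the contract (empty list if it conforms)."""
    problems = []
    for field in CONTRACT:
        value = record.get(field.name)
        if value is None:
            if field.required:
                problems.append(f"missing {field.name}")
            continue
        # bool is a subclass of int in Python, so reject it explicitly for int fields.
        wrong_bool = isinstance(value, bool) and field.type_ is not bool
        if wrong_bool or not isinstance(value, field.type_):
            problems.append(f"{field.name}: expected {field.type_.__name__}")
    # Fields outside the contract are rejected rather than passed through silently.
    allowed = {f.name for f in CONTRACT}
    for extra in sorted(record.keys() - allowed):
        problems.append(f"unexpected field {extra}")
    return problems


def validate_batch(records: list[dict[str, Any]]):
    """Split a batch into accepted and rejected records, or raise if too many fail."""
    accepted, rejected = [], []
    for record in records:
        problems = violations(record)
        if problems:
            rejected.append((record, problems))
        else:
            accepted.append(record)
    reject_rate = len(rejected) / len(records) if records else 0.0
    if reject_rate > MAX_REJECT_RATE:
        raise ContractError(f"reject rate {reject_rate:.0%} exceeds {MAX_REJECT_RATE:.0%}")
    return accepted, rejected
```

Rejected records come back with their violations rather than being silently dropped, which keeps a failure visible at ingestion instead of surfacing later in the agent's output.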

What to validate before launch

Start with field-level contracts, role-based access, and explicit provenance for the data the agent uses to reason or act. Then define what must be redacted, what requires human approval, and what can never be touched by an autonomous path.
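The redaction and approval rules can likewise be expressed as data that the agent runtime consults before every step. The sketch below assumes a hypothetical policy table with three tiers (autonomous, requires approval, forbidden); the action names, field names, and the `redact` and `authorize` helpers are illustrative only. Unknown actions fall through to forbidden, so a new capability stays off until someone classifies it.

```python
from enum import Enum
from typing import Any, Optional


class ActionPolicy(Enum):
    AUTONOMOUS = "autonomous"        # agent may act without a human in the loop
    REQUIRES_APPROVAL = "approval"   # a named human must approve first
    FORBIDDEN = "forbidden"          # never reachable from an autonomous path


# Hypothetical policy table; action names are illustrative assumptions.
ACTION_POLICY = {
    "read_lab_results": ActionPolicy.AUTONOMOUS,
    "schedule_follow_up": ActionPolicy.REQUIRES_APPROVAL,
    "delete_patient_record": ActionPolicy.FORBIDDEN,
}

# Hypothetical set of fields that must be masked before the agent sees a record.
REDACTED_FIELDS = {"ssn", "home_address"}
REDACTION_MARK = "[REDACTED]"


def redact(record: dict[str, Any]) -> dict[str, Any]:
    """Return a copy of the record with sensitive fields masked."""
    return {k: (REDACTION_MARK if k in REDACTED_FIELDS else v) for k, v in record.items()}


def authorize(action: str, approved_by: Optional[str] = None) -> bool:
    """Decide whether the agent may execute an action; unknown actions are denied."""
    policy = ACTION_POLICY.get(action, ActionPolicy.FORBIDDEN)
    if policy is ActionPolicy.AUTONOMOUS:
        return True
    if policy is ActionPolicy.REQUIRES_APPROVAL:
        return approved_by is not None
    return False
```

Keeping the rules in one table rather than scattered through agent code means reviewers can check exactly what the system may see and do before launch.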

This is especially important in healthcare, finance, and infrastructure, where the cost of one bad assumption can be regulatory, operational, or reputational.

Why this speeds delivery rather than slowing it

Teams often assume governance is a brake. In practice, early policy design reduces rework because engineers know the exact boundaries they are building within.

Good governance can make launches faster by eliminating ambiguity about what the system can see, do, and log from day one.