Operations

Explainable AI operations create trust before scale

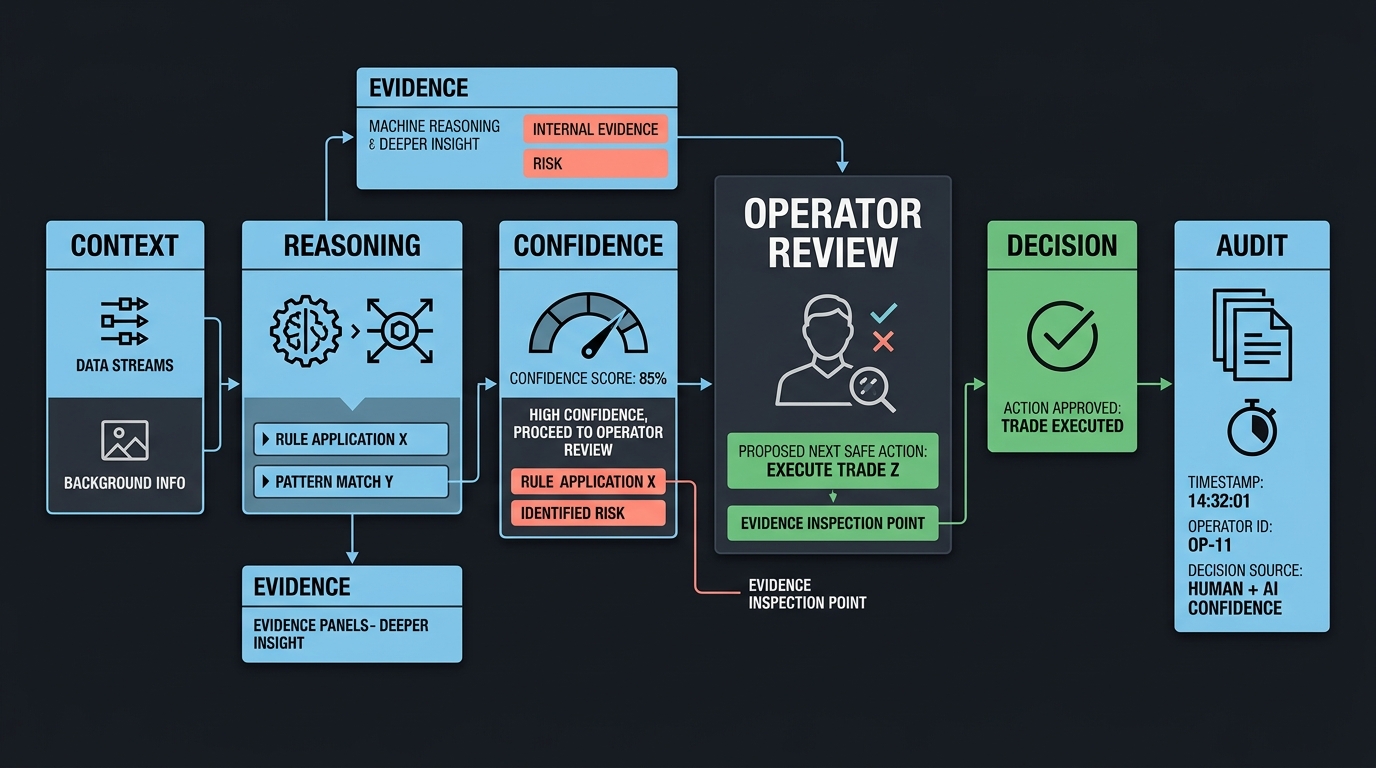

Operators do not need mystical intelligence. They need a system that shows its work and hands control back cleanly when confidence drops.

Reasoning is not the same as execution

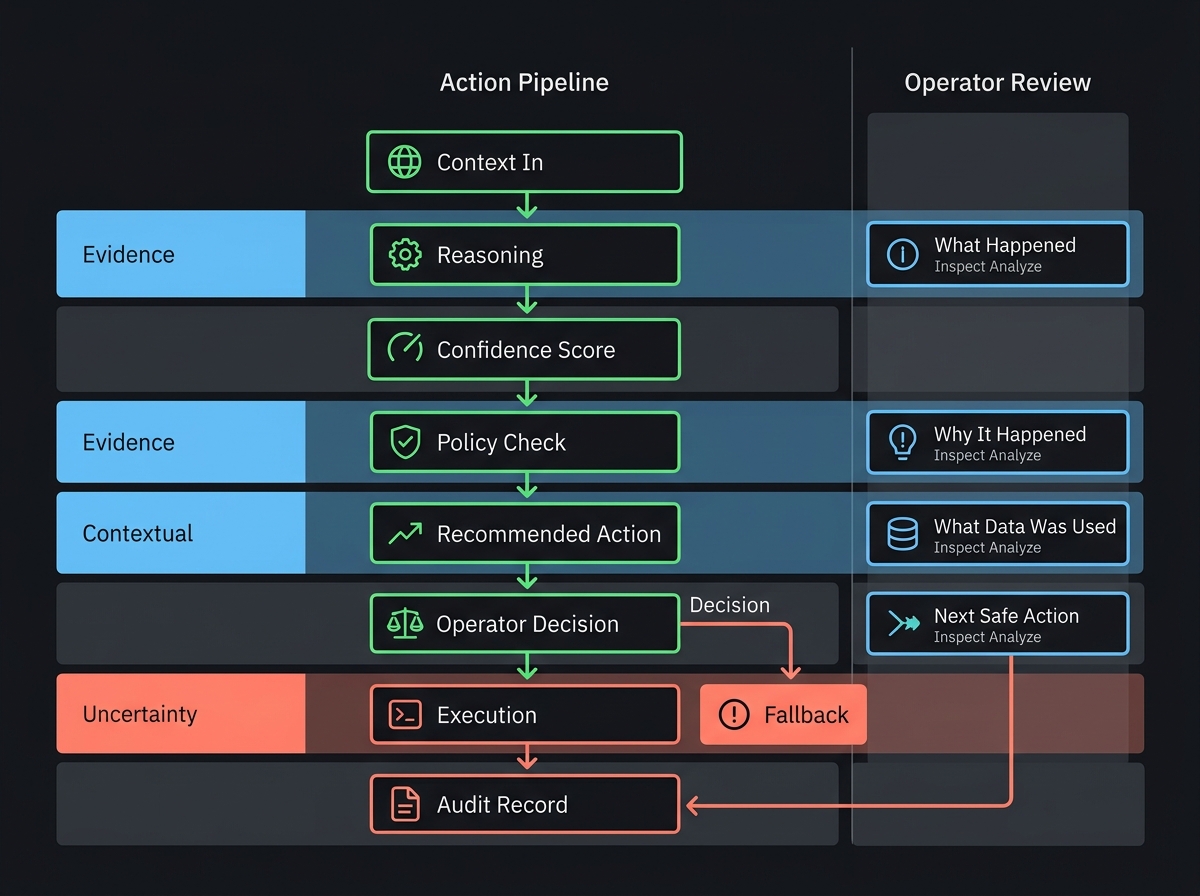

Large models are useful for synthesis, extraction, and recommendation. On their own, they are rarely enough to run sensitive workflows. Real production systems separate the model's reasoning layer from the execution layer that enforces policy and approvals.

That separation is what turns an interesting demo into an operationally trustworthy service.
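As a minimal sketch of that separation, assume the model only ever returns a proposal and a separate execution function decides whether anything actually runs. The `Proposal` type, the `POLICY` table, the action names, and the thresholds below are all hypothetical and chosen for illustration only.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Proposal:
    """Output of the reasoning layer: a suggestion, never a side effect."""
    action: str
    target: str
    confidence: float
    rationale: str


# Hypothetical policy table owned by the execution layer, not by the model.
POLICY = {
    "send_reminder": {"min_confidence": 0.80, "needs_approval": False},
    "issue_refund": {"min_confidence": 0.90, "needs_approval": True},
}


def execute(proposal: Proposal, approved_by: Optional[str] = None) -> str:
    """Enforce policy and approvals before anything is carried out."""
    rule = POLICY.get(proposal.action)
    if rule is None:
        return "rejected: action not in policy"
    if proposal.confidence < rule["min_confidence"]:
        # Hand control back to a human when confidence drops.
        return "escalated: confidence below threshold"
    if rule["needs_approval"] and approved_by is None:
        return "pending: awaiting human approval"
    return f"executed: {proposal.action} on {proposal.target}"


refund = Proposal("issue_refund", "order-1042", 0.95, "duplicate charge detected")
print(execute(refund))                       # waits for a human
print(execute(refund, approved_by="alice"))  # runs once approved
```

The design choice here is that the model cannot widen its own permissions: adding a new automated action means editing the policy table, which can be reviewed like any other change.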

What operators need to see

An operator should be able to answer four questions immediately: what happened, why it happened, what data was used, and what the next safe action is.

That means every AI action needs context, confidence, status, and a clear remediation path. If a reviewer cannot reconstruct the decision, the control plane is incomplete.
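One way to make this concrete is a record that carries an answer to each of the four questions, plus a check that flags records a reviewer could not reconstruct. This is a sketch under assumptions: the `ActionRecord` fields and the `missing_fields` helper are hypothetical names, not part of any particular product.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ActionRecord:
    what: str              # what happened
    why: str               # why it happened
    inputs: List[str]      # what data was used
    confidence: float      # model confidence, assumed to be in [0, 1]
    status: str            # e.g. "recommended", "executed", "escalated"
    next_safe_action: str  # remediation path for the operator


def missing_fields(record: ActionRecord) -> List[str]:
    """Return the names of fields a reviewer would find empty or invalid."""
    missing = []
    for name in ("what", "why", "status", "next_safe_action"):
        if not getattr(record, name).strip():
            missing.append(name)
    if not record.inputs:
        missing.append("inputs")
    if not 0.0 <= record.confidence <= 1.0:
        missing.append("confidence")
    return missing


record = ActionRecord(
    what="Flagged invoice INV-204 for manual review",
    why="",
    inputs=[],
    confidence=0.72,
    status="recommended",
    next_safe_action="Approve or dismiss the flag in the review queue",
)
print(missing_fields(record))  # ['why', 'inputs']
```

A record that returns a non-empty list here is exactly the case described above: the decision cannot be reconstructed, so it should not be shown to operators as complete.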

Scaling trust through supervision

The best launch pattern is supervised automation. Start with AI-generated recommendations that a human reviews, measure their quality, then graduate specific actions to fuller automation once measured performance and guardrails have held up.
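A graduation rule of this kind can be sketched as a per-action gate driven by review outcomes. The thresholds (`MIN_REVIEWS`, `MIN_ACCEPT_RATE`) and the `ReviewStats` type are illustrative assumptions; real values would depend on the risk of each action.

```python
from dataclasses import dataclass


@dataclass
class ReviewStats:
    reviewed: int  # recommendations a human has reviewed for this action
    accepted: int  # of those, how many were accepted without correction


# Hypothetical thresholds, for illustration only.
MIN_REVIEWS = 200
MIN_ACCEPT_RATE = 0.98


def mode_for(stats: ReviewStats) -> str:
    """Decide whether an action stays supervised or may run automatically."""
    if stats.reviewed < MIN_REVIEWS:
        # Not enough evidence yet, regardless of how good the rate looks.
        return "recommend_only"
    accept_rate = stats.accepted / stats.reviewed
    return "automated" if accept_rate >= MIN_ACCEPT_RATE else "recommend_only"


print(mode_for(ReviewStats(reviewed=50, accepted=50)))    # recommend_only
print(mode_for(ReviewStats(reviewed=400, accepted=396)))  # automated
```

Because the gate is evaluated per action, a low-risk action can graduate while a sensitive one stays in recommendation mode, and an action whose acceptance rate falls drops back to supervision the next time the rule is evaluated.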

Trust compounds when teams can inspect the system, correct it quickly, and understand how it behaves under pressure.